Small Language Models, Big Impact: Why Efficiency is Beating Scale in 2026

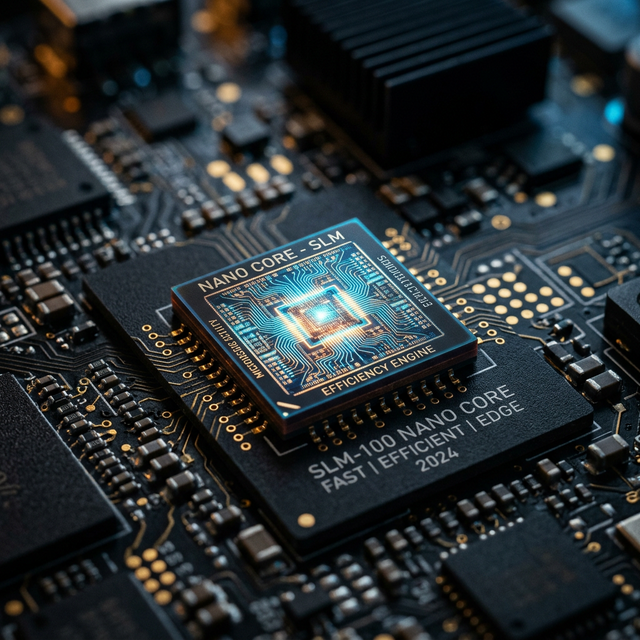

The AI industry is experiencing a counterintuitive trend: smaller models are winning. While headlines once focused on ever-larger models with hundreds of billions of parameters, 2026's breakthrough story is how compact, efficient models are delivering superior real-world value.

The Efficiency Revolution

For years, the AI community assumed bigger was always better. That assumption is weakening as small language models (SLMs) with 1-10 billion parameters match, and on some narrow tasks exceed, the practical performance of models 10-100 times their size.

Why Small Models Are Winning

Speed and Responsiveness

Small models generate each token faster than large ones on the same hardware, so a short reply can arrive in a fraction of a second rather than several seconds. For interactive applications, that responsiveness noticeably improves the user experience.

Cost Efficiency

Running a 7B-parameter model costs a fraction of what a 70B model requires, since it needs roughly a tenth of the memory and compute per token. For businesses processing millions of requests, this translates to large savings, in some cases cutting AI infrastructure costs by 90% or more.
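
To make the arithmetic concrete, here is a minimal sketch that compares monthly serving cost for two models. The request volume, tokens per request, and per-token prices are hypothetical placeholders, not vendor quotes or measured figures; substitute your own numbers.

```python
def monthly_cost(requests_per_month: int, tokens_per_request: int,
                 price_per_million_tokens: float) -> float:
    """Return the monthly serving cost in dollars."""
    total_tokens = requests_per_month * tokens_per_request
    return total_tokens / 1_000_000 * price_per_million_tokens


def savings_fraction(small_cost: float, large_cost: float) -> float:
    """Fraction of the large-model cost saved by switching to the small model."""
    return 1.0 - small_cost / large_cost


# Hypothetical workload: 5M requests per month, 800 tokens each.
# Both prices are illustrative assumptions.
large = monthly_cost(5_000_000, 800, price_per_million_tokens=1.00)
small = monthly_cost(5_000_000, 800, price_per_million_tokens=0.10)

print(f"large: ${large:,.0f}  small: ${small:,.0f}  "
      f"saved: {savings_fraction(small, large):.0%}")
```

The savings figure depends entirely on the price ratio you plug in, so it is worth rerunning with real quotes or measured hardware costs before drawing conclusions.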

Deployment Flexibility

Small models run on consumer hardware: laptops, mobile devices, even edge computing devices. This enables AI features in environments where cloud connectivity is unreliable or prohibited.
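
Whether a model fits on a given device is mostly a question of memory. The sketch below estimates the memory needed for the weights alone at different precisions. It is a back-of-the-envelope calculation that deliberately ignores activations, the KV cache, and runtime overhead, so treat the results as a lower bound.

```python
def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights only, in gigabytes (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# Parameter counts are round numbers chosen for illustration.
for name, params in [("3B", 3e9), ("7B", 7e9), ("70B", 70e9)]:
    fp16 = weight_memory_gb(params, 16)
    int4 = weight_memory_gb(params, 4)
    print(f"{name}: {fp16:.1f} GB at 16-bit, {int4:.1f} GB at 4-bit")
```

By this estimate a 7B model needs about 14 GB at 16-bit precision and about 3.5 GB at 4-bit, which is why it can fit on a laptop, while a 70B model needs about 140 GB at 16-bit.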

Environmental Impact

Smaller models consume less energy for training and inference. As sustainability becomes a priority, efficient models offer a more environmentally responsible approach to AI deployment.

Technical Innovations Driving SLM Performance

Advanced Training Techniques

Techniques like knowledge distillation train a small "student" model to reproduce the outputs of a large "teacher" model, preserving much of the teacher's performance at a fraction of the size.
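
As a rough illustration of the idea, the sketch below computes one common form of distillation loss: the KL divergence between the teacher's and the student's temperature-softened output distributions, scaled by the square of the temperature. It uses plain Python on a single set of logits; the logit values and the temperature are made-up examples, and a real training loop would compute this over batches in a deep learning framework, usually combined with a standard cross-entropy term.

```python
import math


def softmax(logits, temperature=1.0):
    """Convert logits to probabilities, softened by the given temperature."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


# Illustrative logits over three classes (hypothetical values).
teacher = [4.0, 1.0, 0.2]
student_close = [3.5, 1.2, 0.3]
student_far = [0.2, 1.0, 4.0]

print("close student:", distillation_loss(student_close, teacher))
print("far student:  ", distillation_loss(student_far, teacher))
```

A student whose predictions resemble the teacher's gets a lower loss, so minimizing this quantity pulls the student's output distribution toward the teacher's.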

Better Data Curation

Researchers have found that training on carefully curated, high-quality data can produce better results than training on far larger but noisier datasets. Quality beats quantity.

Specialized Architectures

New model architectures optimize for efficiency without sacrificing capability. Innovations in attention mechanisms and layer design extract maximum performance from fewer parameters.

Quantization and Compression

Quantization stores model weights at lower precision: moving from 16-bit to 8-bit or 4-bit values shrinks a model by roughly 50-75%, often with only minor quality loss, making deployment even more practical.
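
The core mechanism can be sketched in a few lines. The example below applies symmetric 8-bit quantization with a single scale to a short list of weights, then restores them to show the rounding error. The weight values are invented for illustration, and the single shared scale is a simplifying assumption; production schemes typically use per-channel or group-wise scales and more careful calibration.

```python
def quantize_int8(weights):
    """Symmetric quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from the integers and the scale."""
    return [v * scale for v in q]


# Hypothetical weights for illustration.
weights = [0.42, -1.27, 0.003, 0.88, -0.51]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", q)
print(f"scale: {scale:.5f}, max round-trip error: {max_error:.5f}")
```

Each weight now occupies one byte instead of two, and the round-trip error is bounded by half the scale. Small weights suffer the most in relative terms, which is one reason finer-grained scales help.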

Real-World Success Stories

Microsoft's Phi-3 Family

Microsoft's Phi-3 models show that models in the 3.8B-7B parameter range can rival GPT-3.5 on many benchmarks while running on laptops and, for the smallest variant, smartphones.

Google's Gemma Series

Google's open-weight Gemma models deliver strong performance for their size, enabling developers to build sophisticated applications without enterprise infrastructure.

Meta's Llama 3 8B

Llama 3 8B delivers impressive reasoning and coding capabilities while running smoothly on consumer hardware, making advanced AI accessible to individual developers.

Industry Adoption Trends

Startups Choosing Small Models

New AI companies are building products around small models from day one, prioritizing speed, cost, and privacy over raw capability they don't need.

Enterprise Hybrid Strategies

Large organizations use small models for routine tasks and large models only when necessary, optimizing costs while maintaining quality.
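
One way such a hybrid setup could work is a cascade: send every request to the small model first and escalate to the large model only when the small model's confidence is low. The sketch below is a hypothetical illustration, not a description of any particular company's system. The `Model` interface, the stub models, and the 0.7 threshold are all assumptions made for the example.

```python
from typing import Callable, Tuple

# Hypothetical interface: a model takes a prompt and returns
# (answer, confidence), with confidence in [0, 1].
Model = Callable[[str], Tuple[str, float]]


def route(prompt: str, small: Model, large: Model,
          threshold: float = 0.7) -> Tuple[str, str]:
    """Try the small model first; escalate if its confidence is too low."""
    answer, confidence = small(prompt)
    if confidence >= threshold:
        return answer, "small"
    answer, _ = large(prompt)
    return answer, "large"


# Stub models for illustration only. Here "confidence" is faked from
# prompt length; a real signal would need to be designed and validated.
def small_stub(prompt: str) -> Tuple[str, float]:
    confidence = 0.9 if len(prompt.split()) < 20 else 0.4
    return f"[small] {prompt[:30]}", confidence


def large_stub(prompt: str) -> Tuple[str, float]:
    return f"[large] {prompt[:30]}", 0.95


print(route("Summarize this ticket in one line.", small_stub, large_stub))
```

The threshold controls the trade-off: raising it sends more traffic to the large model and increases cost, while lowering it keeps more traffic on the small model at some risk to quality.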

Mobile AI Explosion

Smartphone manufacturers are integrating small language models directly into devices, enabling AI features that work offline and protect privacy.

The Right Model for the Right Task

The future isn't about choosing between small and large models—it's about matching model size to task requirements:

- Small models (1-10B): Chat, summarization, simple coding, content generation

- Medium models (10-30B): Complex reasoning, advanced coding, technical writing

- Large models (70B+): Cutting-edge research, extremely complex tasks, specialized domains

For most applications, small models provide the optimal balance of performance, cost, and practicality.

What This Means for You

The small model revolution democratizes AI:

- Build AI features without expensive infrastructure

- Deploy applications that work offline

- Iterate quickly with fast, responsive models

- Reduce costs while maintaining quality

Efficiency isn't just a technical achievement—it's making AI accessible to everyone.

Harness the power of efficient small language models with TernBase. Run fast, capable models locally on your Mac.